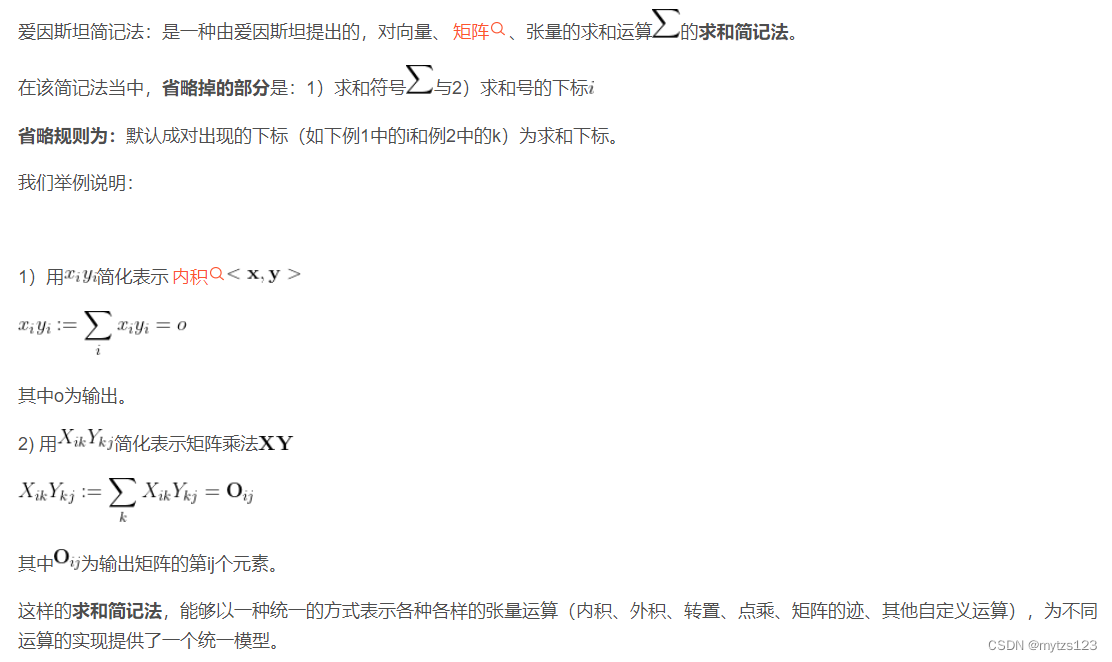

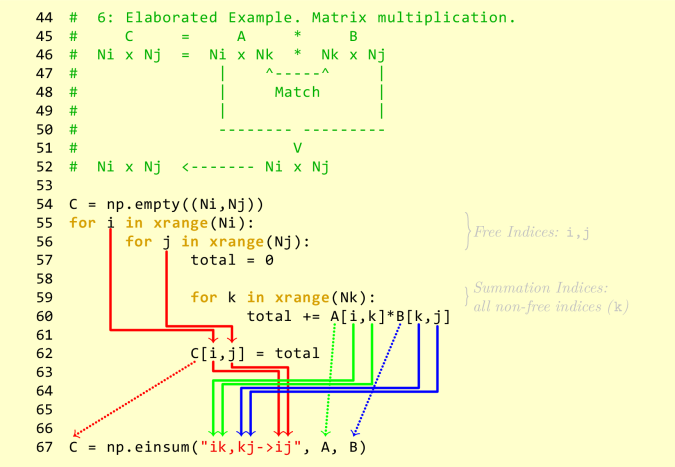

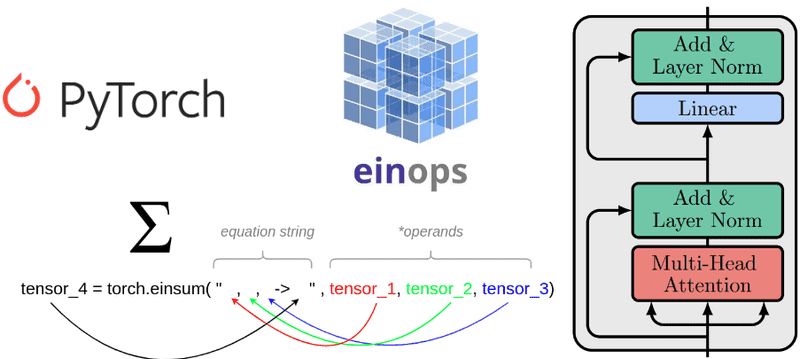

Tim Dettmers on Twitter: "I am a huge fan of einsum notation. Here is a multi-layer transformer in a couple lines of code (without norms though). I think it's simple to read,

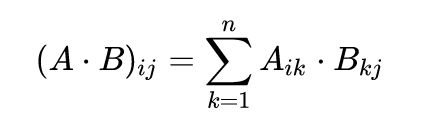

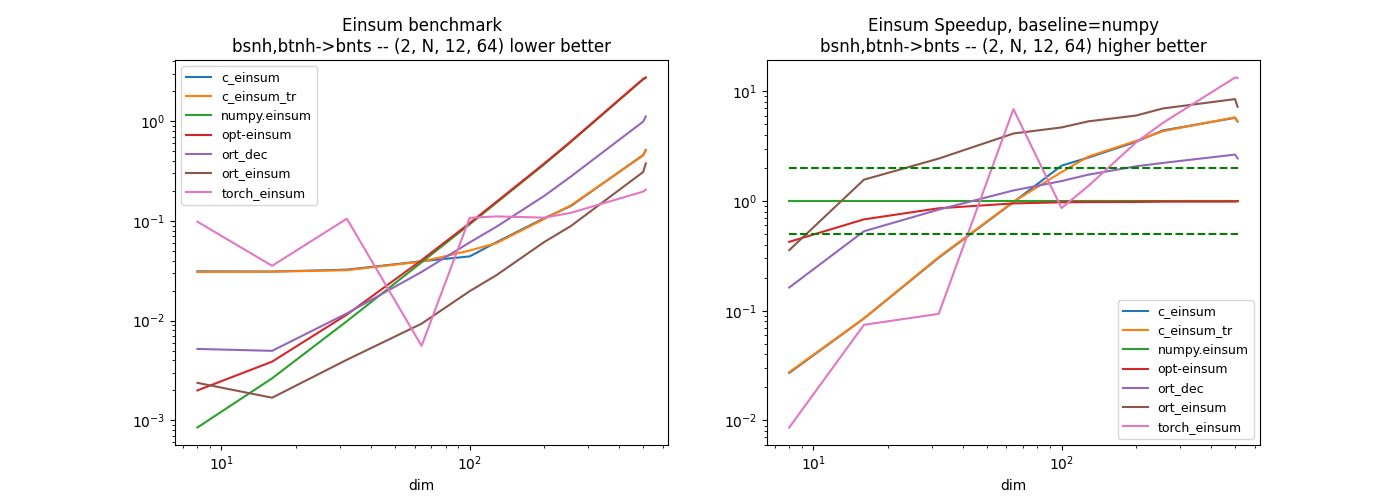

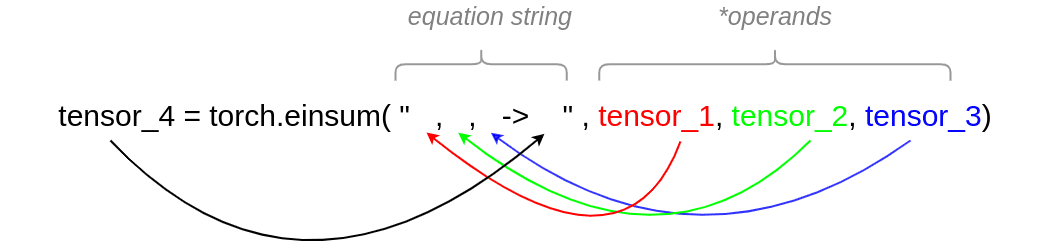

Understanding einsum for Deep learning: implement a transformer with multi-head self-attention from scratch | AI Summer

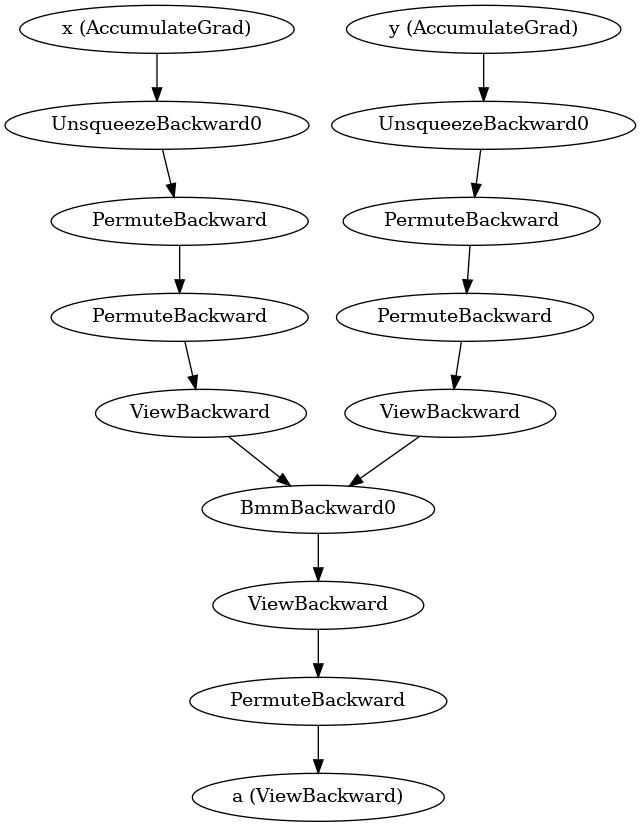

Birchlabs on Twitter: "made #stablediffusion 19% faster on Mac by replacing einsum(…, q, k)*scale with baddbmm(…), and einsum(…, attn, v) with bmm(…). baddbmm is 99% faster than the einsum+multiply. bmm is 15%
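The substitution described in the tweet above can be sketched as follows. This is a minimal illustration, not the code from the tweet: the einsum subscripts are elided in the source, so the ones used here (`"bid,bjd->bij"` and `"bij,bjd->bid"`), the tensor shapes, and the variable names are assumptions chosen to match a typical attention layout. It only checks that the two formulations agree numerically and makes no claim about speed.

```python
import torch

torch.manual_seed(0)

# Hypothetical sizes: (batch * heads, tokens, head_dim)
b, n, d = 2, 4, 8
q = torch.randn(b, n, d)
k = torch.randn(b, n, d)
v = torch.randn(b, n, d)
scale = d ** -0.5

# Attention scores via einsum followed by a separate multiply.
scores_einsum = torch.einsum("bid,bjd->bij", q, k) * scale

# Same scores via baddbmm: out = beta * input + alpha * (batch1 @ batch2).
# With beta=0 the `input` tensor is ignored, so an uninitialized buffer is fine.
scores_baddbmm = torch.baddbmm(
    torch.empty(b, n, n),
    q,
    k.transpose(1, 2),
    beta=0,
    alpha=scale,
)

attn = scores_baddbmm.softmax(dim=-1)

# Weighted sum over values: einsum versus a plain batched matmul.
out_einsum = torch.einsum("bij,bjd->bid", attn, v)
out_bmm = torch.bmm(attn, v)

print(torch.allclose(scores_einsum, scores_baddbmm, atol=1e-5))
print(torch.allclose(out_einsum, out_bmm, atol=1e-5))
```

Folding the scale into `alpha` avoids a separate elementwise multiply, which is the kind of saving the tweet attributes to `baddbmm`; actual gains depend on the backend and PyTorch version.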

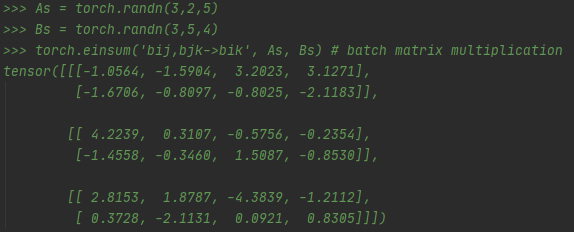

![\[MPS\] einsum returns incorrect matmul result on first invocation on nightly builds · Issue #85224 · pytorch/pytorch · GitHub](https://user-images.githubusercontent.com/6141784/190876454-297dff29-6261-4ace-b97a-5e3c2d55589a.png)

[MPS] einsum returns incorrect matmul result on first invocation on nightly builds · Issue #85224 · pytorch/pytorch · GitHub

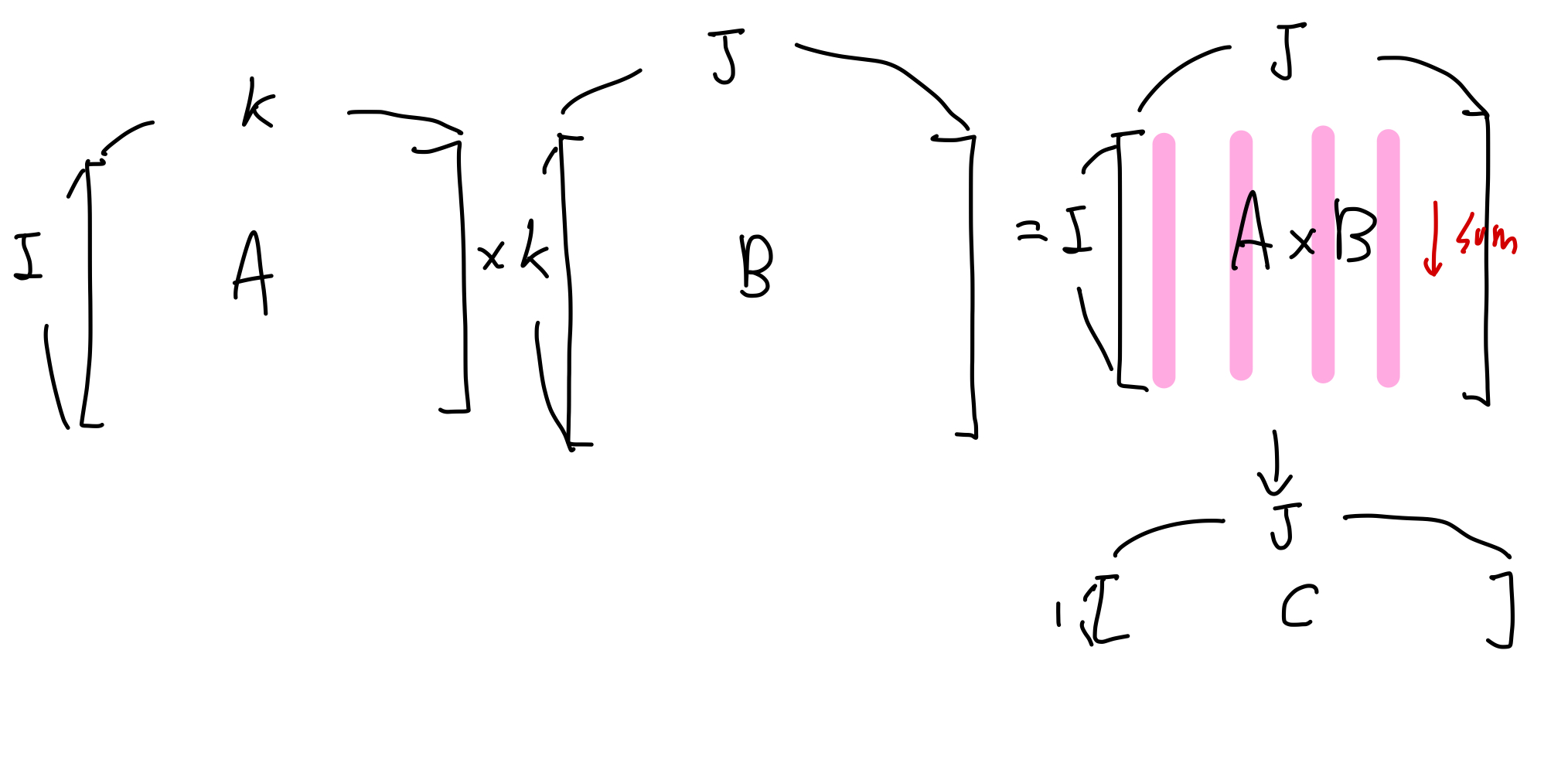

torch.einsum 400x slower than numpy.einsum on a simple contraction · Issue #10661 · pytorch/pytorch · GitHub
